We know how to build robots. Tesla wants to ship a million Optimus units a year by 2030. 1X is targeting 10,000 humanoids. Figure, Apptronik, Unitree, the list grows monthly. The factories are ready. The supply chains are spinning up.

Whether any of this will work is genuinely unclear. But if it does, the bottleneck won't be hardware. The hardware is on a clear trajectory forward. The software is not.

Simulation Falls Short

For years, the dominant theory was that simulation would get us to autonomy. Build a sufficiently detailed virtual world, train the robot in it, deploy.

It doesn't work. Or rather, it works until it doesn't.

Simulation can only model what you already know. A warehouse sim has clean aisles, consistent lighting, perfect pallets. The real warehouse has shrink wrap dangling off a shelf that LiDAR reads as a concrete wall. It has a forklift driver who cuts across your lane because he's running late. It has sun glare flooding through an open loading dock at 4pm.

A 1% failure rate in the lab is a rounding error. A 1% failure rate across a million deployed robots is 10,000 robots frozen in place, blocking aisles, dropping pallets, costing money and diminishing trust every second.

Simulation trains robots for the world as we imagine it. Teleoperation trains them for the world as it is.

Hardware vendors want to ship fast and reach scale fast. In robotics, that means shipping hardware before the autonomy is ready, then using real-world data to close the gap. The flywheel is simple: deploy, collect, train, redeploy.

Currently, this doesn't really work. Robotics demands a high degree of accuracy in real-world use cases, and no system yet performs real-world tasks accurately enough to be a good generalist. Most of these robots will ship and disappoint.

But here's the insight that changes the math: when a human teleoperates a robot, they're not just "driving." They're generating the most valuable training data in robotics.

A simulation-trained robot encounters something it's never seen and freezes. A teleoperated robot encounters the same thing, and a human solves it. That solution, the exact motor commands, the sensor readings, the context, becomes a permanent lesson. The fleet learns it once and never fails on it again.

Stanford's Mobile ALOHA showed that 50 human demonstrations are enough to teach a robot to cook shrimp. Toyota Research Institute taught robots dexterous manipulation in an afternoon. The pattern is clear. Human demonstration data is not a stopgap. It's the primary fuel.

This creates a compounding loop that simulation fundamentally cannot replicate:

1. Robot encounters unknown scenario

2. Human takes over and solves it

3. Solution becomes training data

4. Model improves, fleet-wide

5. Next time, the robot handles it autonomously

6. Human moves on to the next unsolved problem

Every human intervention makes the entire fleet smarter. The hard problems get solved first because those are the ones that trigger interventions. The system automatically prioritizes what matters.
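
The loop above can be sketched as a toy model in Python. Everything here is an illustrative assumption rather than a description of any real system: the `Fleet` class, the idea that a scenario is a plain string, and the idea that "retraining" simply adds logged scenarios to a shared set. Real imitation learning generalizes from demonstrations rather than memorizing them; the sketch only shows how interventions feed back into the fleet.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Fleet:
    """Hypothetical fleet sharing one policy, modeled as a set of known scenarios."""

    known: set[str] = field(default_factory=set)
    demonstrations: list[str] = field(default_factory=list)

    def handle(self, scenario: str) -> str:
        if scenario in self.known:
            return "autonomous"  # step 5: the robot handles it on its own
        # Steps 1-3: unknown scenario, a human takes over, the solution is logged.
        self.demonstrations.append(scenario)
        return "human"

    def retrain(self) -> None:
        # Step 4: fold the logged demonstrations into the fleet-wide policy.
        self.known.update(self.demonstrations)
        self.demonstrations.clear()


scenarios = ["clear aisle", "dangling shrink wrap", "sun glare"]
fleet = Fleet(known={"clear aisle"})

day_one = [fleet.handle(s) for s in scenarios]
fleet.retrain()
day_two = [fleet.handle(s) for s in scenarios]

print(day_one)  # two of the three scenarios need a human
print(day_two)  # after retraining, none do
```

In this toy version, only the scenarios that triggered an intervention ever reach the training set, which is the prioritization described above.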

The Data Problem

Large language models work because language is surprisingly low-dimensional. Google Research estimated the intrinsic dimension of natural language at around 42, meaning the space of meaningful text can be compressed onto a manifold of roughly 42 independent axes, and we've saturated that manifold. The internet contains trillions of tokens of text. LLMs have essentially seen every way a sentence can be constructed.

The physical world is not like this.

A robot picking up a cup deals with friction coefficients, surface textures, liquid dynamics, grip pressure, wrist angles, ambient lighting, table height, cup weight, cup material, whether the handle faces left or right. Each variable interacts with every other. Millions of years of evolution have given us exceptionally strong priors for this; robots have none. The intrinsic dimension of real-world manipulation tasks is almost certainly far higher than that of language, plausibly hundreds or thousands of independent axes.

And we have almost no data covering it.

There is roughly 100,000x more natural language data available on the internet than there is physical interaction data in all existing robotics datasets combined. The Open X-Embodiment dataset, the largest open robotics dataset ever assembled, representing years of work across 21 institutions, contains about 22TB. A single month of Reddit produces more text data than the entire history of robotics research has produced in demonstrations.

This is the fundamental mismatch. The manifold is larger. The data is 100,000x smaller. Simulation can't fill the gap because it only covers the subspace of scenarios we can imagine. The only way to cover the real manifold is to collect data in the real world, at scale, continuously.

One positive is that real-world data, and especially human-guided data, is likely far richer than text data can be. The fleet doesn't just collect more data; it covers more of the space. Fifty demonstrations can solve a single task; entropy is easy to come by, at least for now.

If humanoids and other general-purpose robots are to be operated in the world, they should be operated with human supervision. Currently that means teleoperation, but teleoperation has its own problems.

Quality Teleoperation Closes the Gap

You need people to teleoperate the robots. This is a major bottleneck if you want to scale deployment fast. At a 1-to-1 robot-to-teleoperator ratio, you'd need to hire somebody every time you wanted to sell a robot. This is obviously untenable.
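
A back-of-the-envelope sketch of the staffing arithmetic, in Python. The function name `operators_needed` and the model behind it are assumptions for illustration only: it supposes each robot needs a human for a fixed percentage of its operating time, that interventions spread evenly, and that an operator can switch between robots at no cost. Real staffing would also have to account for queueing, shifts, and peak load.

```python
def operators_needed(robots: int, intervention_percent: int) -> int:
    """Operators required under the simplifying assumptions stated above.

    intervention_percent is the share of operating time (0-100) during which
    a robot needs a human at the controls.
    """
    if robots < 0 or not 0 <= intervention_percent <= 100:
        raise ValueError("robots must be >= 0 and intervention_percent in 0..100")
    # Integer ceiling division avoids floating-point rounding surprises.
    return (robots * intervention_percent + 99) // 100


fleet_size = 1000
one_to_one = operators_needed(fleet_size, 100)  # every robot always needs a human
quarter = operators_needed(fleet_size, 25)
rare = operators_needed(fleet_size, 5)

print(one_to_one, quarter, rare)
```

At 100% the function reproduces the 1-to-1 case: one hire per robot sold. The headcount falls only as the share of time needing a human falls.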

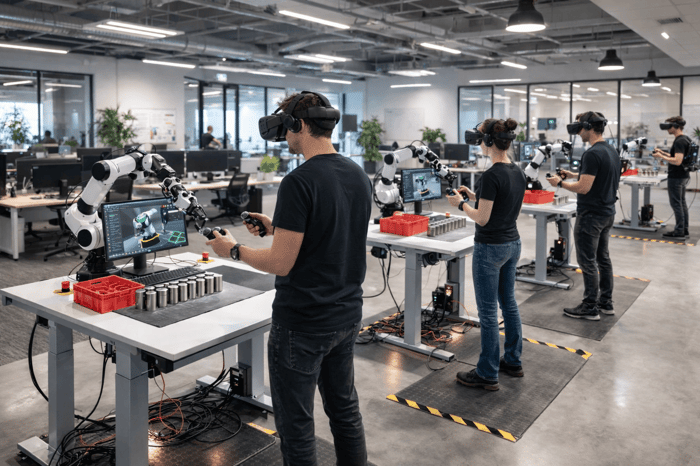

Teleoperation software needs to get better. High-latency VR streaming causes motion sickness and poor robot responsiveness. Inverse kinematics (IK) is poor, so the robot's motion doesn't faithfully track the operator's. Both lead to bad training data.

And the infrastructure to connect operators to robots at scale simply doesn't exist. Robot manufacturers build proprietary, siloed systems. Every vendor has its own control protocol, its own data format, its own fleet management dashboard. Those dashboards are passive: they tell you something went wrong but give you no way to fix it remotely. The industry has observability without agency.

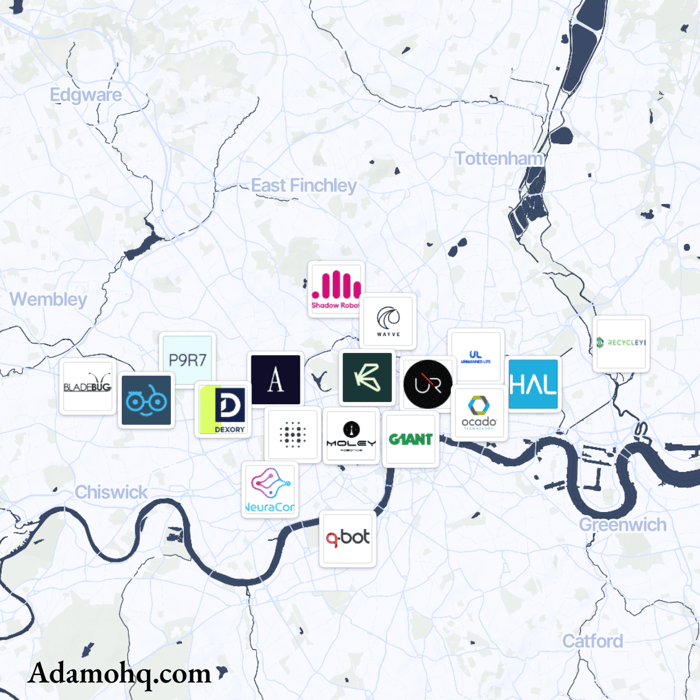

The premise of Adamo is to accelerate the scaling of robotics by allowing immediate deployment of robots to collect data in real world settings. Two components tackle the problems above:

A remote teleoperation service that hooks your robot up to teleoperators managed by us, so that robot companies don't have to scale their hiring at the rate that they sell robots. One protocol, any robot: quadrupeds, manipulators, humanoids, forklifts. No months-long integration. No vendor lock-in.

Low-latency, latency-robust teleoperation software that allows for the ergonomic collection of high-quality teleop data for imitation learning from a remote location. Latency is king; everything we build is in its service.

You ship the hardware. You connect it to Adamo. Humans handle what autonomy can't, yet. And every time they do, the autonomy gets better.

The robots that learn fastest will be the ones with the best human data pipeline. That pipeline is what we're building.