The simplest way to understand why remote teleop with a humanoid robot feels nothing like a Zoom call is to count the milliseconds.

A video call at 200ms is fine. People talk over each other once in a while, faces drift a hair out of sync with the audio, and nobody complains. Remote teleop at 200ms cannot pick up a coffee cup. The operator commands a pinch grasp, sees the result a fifth of a second later, overcorrects, watches the overcorrection arrive a fifth of a second after that, and ends up oscillating until the cup tips and lands on its side.
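
The overcorrection loop can be reproduced with a deliberately crude toy model. The operator sees the hand's position only after a fixed delay and, every 10ms, corrects 10% of the error they can see. The gain, the time step, and the `simulate_reach` helper are assumptions chosen for illustration. This is not a model of real human motor control or of Adamo's stack.

```python
def simulate_reach(delay_s, gain_per_s=10.0, dt_s=0.01, duration_s=3.0, target=1.0):
    """Toy model (hypothetical): an operator nudges a hand toward `target`,
    correcting a fixed fraction of the error they *see*, where what they see
    is the hand's position `delay_s` seconds ago."""
    delay_steps = round(delay_s / dt_s)
    k = gain_per_s * dt_s  # fraction of the visible error corrected per step
    # History buffer: the hand has been resting at 0 for the whole delay window.
    positions = [0.0] * (delay_steps + 1)
    for _ in range(round(duration_s / dt_s)):
        seen = positions[-1 - delay_steps]  # stale observation on the operator display
        positions.append(positions[-1] + k * (target - seen))
    return positions[delay_steps:]


for delay_ms in (20, 200):
    path = simulate_reach(delay_ms / 1000)
    print(f"{delay_ms:>3}ms delay: peak position {max(path):.2f} (target 1.00)")
```

With these assumed numbers, the 20ms run settles onto the target without overshooting. The 200ms run shoots past double the target and then swings ever wider, which is the coffee-cup failure in miniature. For this particular model, the loop becomes unstable once the delay passes roughly 150ms. That threshold moves with the assumed gain, so the sketch illustrates the shape of the cliff, not its exact location.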

Manipulation has a latency cliff around 100ms, beyond which closed-loop control becomes impossible for a human at the other end of the link. So when we engineer Adamo to stay well under that cliff, the target is a hard requirement, not a number we picked to look good in a deck. It is the line between a robot that ships into a customer’s warehouse and a robot that does great demos at conferences.

The question worth asking is where those milliseconds actually go, and why the easiest ones to lose are the ones a video call is happy to spend.

The full pipeline

A remote teleop session is a closed loop with two halves: the downlink from robot to operator, and the uplink from operator to robot. Each half has its own latency budget, and the two compound.

On the downlink, a photon hits a camera sensor. The sensor reads out a frame. A codec encodes it. The encoded frame is packetized, given headers, handed to the network stack, and shipped across whatever transport is available. At the operator end, the packets are reassembled, decoded, and rendered to a display. The operator sees the image.

On the uplink, the operator moves a hand or pulls a control. That input is sampled, packed into a control message, and shipped back. At the robot, the message is parsed, the command is dispatched to the motor controllers, and the actuators move. The world changes. The next frame is captured. The loop repeats, dozens of times a second, for as long as the session runs.

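Glass-to-glass latency is the total time from photon to pixel on the operator display. End-to-end control latency is the time from operator input to robot motion. Both matter, and they stack: the operator sees the effect of an input only after the command has reached the robot, the robot has moved, and the resulting frame has made the full trip back to the display.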

Where the budget goes

In a typical remote teleop stack built on WebRTC, the budget for a single frame crossing a healthy network from California to Texas breaks down something like this:

Camera capture and sensor readout: 5 to 20ms. Depends on the sensor and how its rolling shutter behaves.

Video encoding: 10 to 30ms. H.264 at moderate complexity. More if the codec is running on CPU rather than a dedicated hardware encoder.

Packetization and OS network stack: 1 to 5ms. Usually small. Almost never the bottleneck.

Network transit: 20 to 60ms. Dominated by physical distance and router queuing. Light in fiber moves at about two-thirds of its speed in vacuum, so the roughly 4,000km from coast to coast in the US sets a hard floor of about 20ms one way, before any routing detours.
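
The propagation floor is distance divided by the speed of light in glass. The sketch below assumes a velocity factor of exactly two-thirds and uses approximate great-circle distances: about 3,940km for Los Angeles to New York and about 2,400km for San Francisco to Dallas, standing in for California to Texas. Real fiber routes are longer than the great circle, so treat the results as lower bounds. `fiber_delay_ms` is a hypothetical helper.

```python
C_VACUUM_KM_PER_S = 299_792.458
VELOCITY_FACTOR = 2 / 3  # assumption: typical silica fiber, refractive index ~1.5


def fiber_delay_ms(distance_km: float, route_stretch: float = 1.0) -> float:
    """One-way propagation delay over fiber, ignoring queuing and equipment.

    route_stretch > 1 models fiber paths that are longer than the great circle.
    """
    speed_km_per_ms = C_VACUUM_KM_PER_S * VELOCITY_FACTOR / 1000
    return distance_km * route_stretch / speed_km_per_ms


for name, km in [("Los Angeles to New York", 3_940), ("San Francisco to Dallas", 2_400)]:
    one_way = fiber_delay_ms(km)
    print(f"{name}: {one_way:.1f}ms one way, {2 * one_way:.1f}ms round trip")
```

This gives just under 20ms one way coast to coast and about 12ms from the Bay Area to Dallas. Queuing, detours, and equipment delay come on top of that.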

Receive-side buffering: 30 to 100ms. This is where most stacks bleed time. Jitter buffers smooth out variable packet arrival but add latency the operator can never recover. Most WebRTC implementations target a buffer that prioritizes call quality over responsiveness.
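
A fixed playout delay makes the cost concrete. The sketch below assumes a buffer that releases every frame exactly `playout_delay_ms` after it was sent. Real WebRTC jitter buffers adapt their delay, so this is an approximation, and `fixed_playout` is a hypothetical helper. Frames that arrive early still wait for their slot, and frames that miss it are lost.

```python
def fixed_playout(send_ms, arrive_ms, playout_delay_ms):
    """Simplified fixed-delay jitter buffer (hypothetical).

    Returns (latency of each displayed frame, time each displayed frame sat
    idle in the buffer, number of frames that arrived too late to show).
    """
    latencies, idle, late = [], [], 0
    for sent, arrived in zip(send_ms, arrive_ms):
        release = sent + playout_delay_ms
        if arrived <= release:
            latencies.append(release - sent)  # always equals the buffer delay
            idle.append(release - arrived)    # time the frame sat ready but unseen
        else:
            late += 1
    return latencies, idle, late


# Roughly 60fps capture (16ms spacing), 30ms transit, one frame hit by a 70ms spike.
send = [i * 16 for i in range(6)]
transit = [30, 30, 70, 30, 30, 30]
arrive = [s + t for s, t in zip(send, transit)]

for delay in (40, 80):
    lat, idle, late = fixed_playout(send, arrive, delay)
    print(f"buffer {delay}ms: shown at {lat[0]}ms, avg idle {sum(idle) / len(idle):.1f}ms, late {late}")
```

Sizing the buffer to survive the single 70ms spike pushes every frame to 80ms. Five of the six frames arrived in 30ms and then spent 50ms each sitting in the buffer.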

Decoding: 5 to 20ms. Usually well behaved.

Render to display: 16ms or more. A 60Hz display refreshes only once every 16.7ms, and a browser-based operator UI adds another frame or two on top.

Add it up and a typical WebRTC stack lands at 150 to 250ms glass to glass. That is fine for a video call. It is catastrophic for a robot hand.
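
The per-stage ranges above can be tallied directly. In this minimal sketch, the render row uses only the 16ms refresh floor and leaves out the extra frame or two a browser UI adds, so the top end is an underestimate.

```python
# Per-stage (low, high) estimates in ms, copied from the breakdown above.
BUDGET_MS = {
    "capture and readout": (5, 20),
    "encode": (10, 30),
    "packetization and OS stack": (1, 5),
    "network transit": (20, 60),
    "receive-side buffering": (30, 100),
    "decode": (5, 20),
    "render (60Hz floor only)": (16, 16),
}


def tally(budget):
    """Sum the low and high ends, and compute each stage's midpoint."""
    low = sum(lo for lo, _ in budget.values())
    high = sum(hi for _, hi in budget.values())
    mid = {stage: (lo + hi) / 2 for stage, (lo, hi) in budget.items()}
    return low, high, mid


low, high, mid = tally(BUDGET_MS)
total_mid = sum(mid.values())
worst_stage = max(mid, key=mid.get)
print(f"range {low}-{high}ms, midpoint total {total_mid:.0f}ms")
print(f"largest midpoint stage: {worst_stage} ({mid[worst_stage] / total_mid:.0%})")
```

Even with every stage at its best, the total is 87ms. The midpoints sum to about 169ms, inside the 150 to 250ms range, and receive-side buffering is the largest single line.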

Peel back every avoidable layer and the picture changes. Sensor readout, transit, and display refresh are physics. Everything else is engineering, and engineering is negotiable.

A few of the moves that bring the floor down:

• A low-latency codec, tightly configured (for example, with B-frames and lookahead disabled so no frame waits on future frames) and running on a hardware encoder, drops encode latency to single-digit milliseconds.

• A receive strategy that tolerates occasional dropped frames, rather than buffering against jitter, brings receive-side latency close to zero.

• A transport designed for control loops, rather than calls, removes the call quality overhead that WebRTC bakes in at every stage of the pipeline.
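
One way to trade jitter buffering for occasional drops is a "newest frame wins" receiver. At each display refresh, it shows the newest frame that has fully arrived and discards anything older. The sketch below is a hypothetical illustration of that idea, with a 16ms refresh and integer milliseconds. It is not Adamo's receiver.

```python
def newest_frame_wins(frames, refresh_ms=16):
    """frames: list of (frame_id, sent_ms, arrived_ms), ids increasing in capture order.

    Returns [(frame_id, latency_ms)] for frames actually displayed. A frame that
    arrives after a newer one has been shown is dropped instead of delaying it.
    """
    shown, last_id = [], -1
    end = max(arrived for _, _, arrived in frames)
    for vsync in range(0, end + refresh_ms, refresh_ms):
        ready = [f for f in frames if f[2] <= vsync and f[0] > last_id]
        if ready:
            frame_id, sent, _ = max(ready)  # newest complete frame (ids are unique)
            shown.append((frame_id, vsync - sent))
            last_id = frame_id
    return shown


# Same pattern as a jittery link: 16ms spacing, 30ms transit, frame 2 hit by a 70ms spike.
frames = [(i, i * 16, i * 16 + t) for i, t in enumerate([30, 30, 70, 30, 30, 30])]
print(newest_frame_wins(frames))
```

Frame 2, delayed by the spike, is never shown. Every other frame reaches the screen 32ms after it was sent, which is the 30ms transit plus the wait for the next refresh. Absorbing that spike with a fixed buffer would instead add at least 70ms to every frame.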

The honest physics floor for a continental remote teleop session is somewhere in the 25 to 50ms range. That is the territory we engineer toward, and the gap between that floor and a 200ms WebRTC stack is the gap we exist to close.

Control and video are not the same kind of data

Here is the part most stacks get wrong. They treat video and control as the same kind of data and push both through the same pipeline.

Control commands are tiny. A pose update for a humanoid hand is a few hundred bytes. Video frames are large; a 1080p H.264 frame at moderate complexity runs 50 to 200KB. When a control message queues behind a video frame, it inherits all the latency that frame causes, which is exactly the worst case at exactly the worst time.
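
Most of the head-of-line cost is serialization: a frame has to finish going onto the wire before anything queued behind it can follow. This rough sketch assumes a single FIFO queue on a 20Mbps link shared by both kinds of traffic, with 1KB = 1,000 bytes. All of these are illustrative assumptions, and `serialization_ms` is a hypothetical helper.

```python
def serialization_ms(size_bytes: int, link_mbps: float) -> float:
    """Time to clock `size_bytes` onto a link of `link_mbps` megabits per second."""
    bits_per_ms = link_mbps * 1_000  # 1 Mbps = 1,000 bits per millisecond
    return size_bytes * 8 / bits_per_ms


LINK_MBPS = 20     # assumed capacity of the shared link
CONTROL_BYTES = 300  # "a few hundred bytes"
for frame_kb in (50, 200):
    wait = serialization_ms(frame_kb * 1_000, LINK_MBPS)
    own = serialization_ms(CONTROL_BYTES, LINK_MBPS)
    print(f"{frame_kb}KB frame ahead: control waits {wait:.0f}ms to send {own:.2f}ms of data")
```

On these assumptions, a command that needs about a tenth of a millisecond of link time waits 20 to 80ms behind a single frame. The same wait repeats at every congested hop that stores and forwards the frame.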

This is why our protocol routes control commands over a dedicated channel, isolated from video. When the network gets congested, the right behavior is for video to degrade gracefully, not for the robot to stop responding. A dropped frame is recoverable. A delayed command is not.
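
On a shared link, strict-priority scheduling approximates a dedicated control path. The sketch below uses a hypothetical `PrioritySender` that holds video as small packets in a short, bounded queue, so stale video is shed first and a command never waits behind queued video. It illustrates the principle and is not Adamo's protocol.

```python
from collections import deque


class PrioritySender:
    """Strict-priority scheduler (hypothetical): control before video, always."""

    def __init__(self, max_video_packets: int = 4):
        self.control = deque()
        # Bounded: when full, appending evicts the oldest video packet,
        # so congestion degrades video instead of delaying commands.
        self.video = deque(maxlen=max_video_packets)

    def enqueue_control(self, msg: bytes) -> None:
        self.control.append(msg)

    def enqueue_video(self, packet: bytes) -> None:
        self.video.append(packet)

    def next_packet(self):
        """Called whenever the link is free to send one more packet."""
        if self.control:
            return self.control.popleft()
        if self.video:
            return self.video.popleft()
        return None


sender = PrioritySender(max_video_packets=2)
for i in range(3):
    sender.enqueue_video(f"video-{i}".encode())  # video-0 is evicted
sender.enqueue_control(b"grip-close")
print([sender.next_packet() for _ in range(4)])
```

The command jumps ahead of video that was queued before it, and the oldest video packet is discarded rather than sent late. Splitting frames into small packets matters here: once a packet is on the wire it cannot be preempted, so smaller packets bound how long a command can be stuck behind video.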

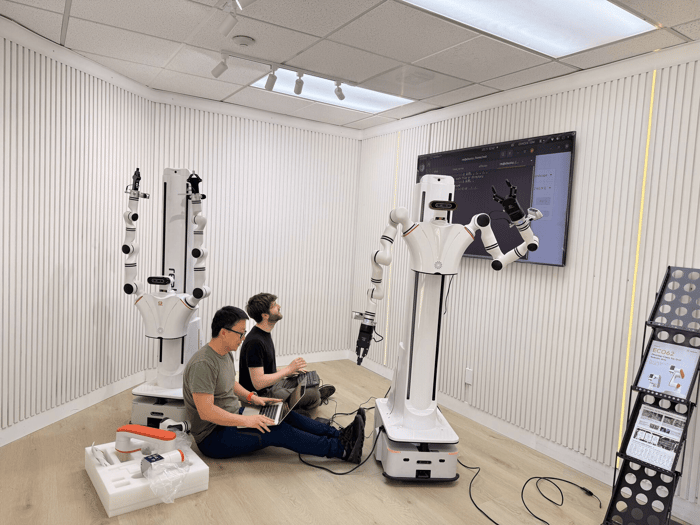

What Adamo does differently

We rebuilt the networking stack from the ground up for robotics, which sounds like a thing every infrastructure company says, but in our case it was the only honest path through the problem. The list below is what the protocol is designed to do, not a feature checklist we picked off a competitor’s pricing page:

• Glass-to-glass latency well below the WebRTC floor, with the gap widening sharply as network conditions degrade. The stack holds its responsiveness in exactly the moments where WebRTC stacks collapse.

• Control-first architecture. Control messages travel over a dedicated channel and stay responsive when video bitrate has to adapt. The robot keeps moving.

• First-class ROS and ROS2 integration. Drops into existing robot stacks without the months of glue code teams normally write to bridge their middleware to a video transport.

• Built for the hardware real robots ship with. NVIDIA hardware acceleration for capture and encode, on the kinds of edge devices that actually live on robots in the field.

• Encryption on every stream by default. TLS 1.3 as the floor, no plaintext path, no configuration required to turn it on.
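
For a TLS-over-TCP channel, a TLS 1.3 floor is a one-line policy in most libraries. The example below uses Python's standard `ssl` module purely as an illustration. The source does not say what Adamo's transport is built on, and a UDP-based transport would rely on DTLS or QUIC rather than this API. `strict_client_context` is a hypothetical helper.

```python
import ssl


def strict_client_context() -> ssl.SSLContext:
    """Client-side TLS context: certificates verified, TLS 1.2 and older refused."""
    ctx = ssl.create_default_context()            # verifies certificates and hostnames
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3  # handshakes below 1.3 fail
    return ctx


ctx = strict_client_context()
print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

A socket wrapped with `ctx.wrap_socket(sock, server_hostname=...)` then fails its handshake against any peer that cannot negotiate TLS 1.3. No plaintext fallback is possible because there is no code path that skips the wrap.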

The bottom line

Latency is not a single number. It is a budget you spend across capture, encoding, transit, buffering, decoding, rendering, command, and actuation. Most stacks treat each step as a black box and accept whatever overhead it adds. We treat the whole pipeline as one problem and optimize it end to end, because that is the only way to close the gap to the physics floor without cheating.

If you are building a humanoid, an AMR, or an autonomous vehicle, and your operators are losing tasks they should be winning, the place to look is the milliseconds. We are happy to run your stack against ours. Book a demo here.